This is a re-post of my 2012 article in Dr. Dobb’s Journal, that is still available through the amazing WayBackMachine web archive.

In my article on parallel merge, I developed and optimized a generic parallel merge algorithm. It utilized multiple CPU cores and scaled well in performance. The algorithm was stable, leading directly to Parallel Merge Sort. However, it was not an in-place implementation. In this article, I develop an in-place parallel merge, which enables in-place parallel merge sort.

The STL implements sequential merge and in-place merge algorithms, as merge() and inplace_merge(), which run on a single CPU core. The merge algorithm is O(n), leading to O(nlgn) merge sort, while the in-place merge is O(nlgn), leading to O(n(lgn)2) in-place merge sort. Because merge is faster than in-place merge, but requires extra memory, STL favors using merge whenever memory is available, copying the result back to the input array (under the hood).

Revisit Not-In-Place

The divide-and-conquer merge algorithm described in Parallel Merge, which is not-in-place, is illustrated in Figure 1. At each step, this algorithm moves a single element X from the source array T to the destination array A, as follows.

The two input sub-arrays of T are from [p1 to r1] and from [p2 to r2]. The output is a single sub-array of A from [p3 to r3]. The divide step is done by choosing the middle element within the larger of the two input sub-arrays—at index q1. The value at this index is then used as the partition element to split the other input sub-array into two sections – less than X and greater than or equal to X. The partition value X (at index q1 of T) is copied to array A at index q3. The conquer step recursively merges the two portions that are smaller than or equal to X— indicated by light gray boxes and light arrows. It also recursively merges the two portions that are larger than or equal to X—indicated by darker gray boxes and dark arrows.

The algorithm proceeds recursively until the termination condition —when the shortest of the two input sub-arrays has no elements. At each step, the algorithm reduces the size of the array by one element. One merge is split into two smaller merges, with the output element placed in between. Each of the two smaller merges will contain at least N/4 elements, since the left input array is split in half.

This algorithm is O(n) and is stable. It was shown not to be performance competitive in its pure form. However, performance became equivalent to other merges in a hybrid form, which utilized a simple merge to terminate recursion early. The hybrid version enabled parallelism by using of divide-and-conquer method, scaled well across multiple cores, outperforming STL merge by over 5X on quad-core CPU, being limited by memory bandwidth. It was also used as the core of Parallel Merge Sort, which scaled well across multiple cores, utilizing a further hybrid approach to gain more speed, and providing a high degree of parallelism, Θ(n/lg2n).

In-Place

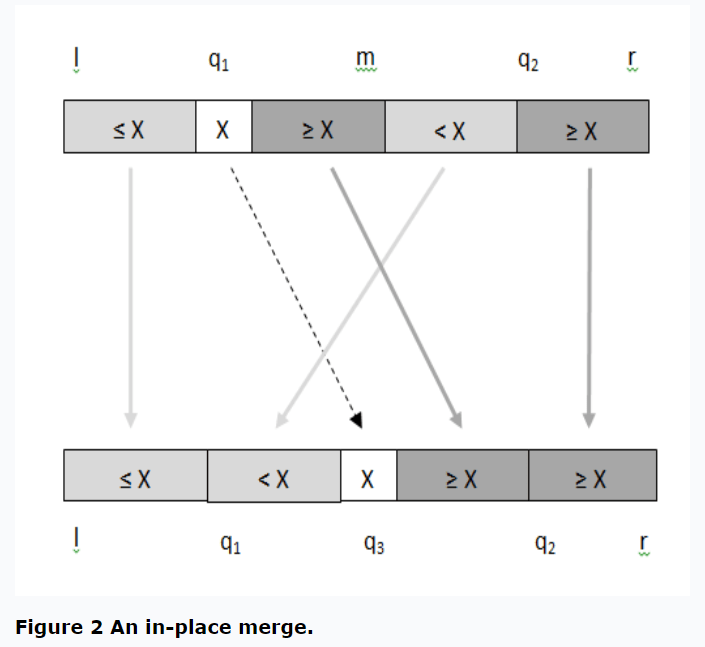

The main idea for in-place merge, illustrated in Figure 2, is based on a modification to the not-in-place merge. Instead of moving a single element X at location q1 to location q3 at each recursive step, all elements between q1 and q2 are moved using In-Place Block Swap (Block Exchange). Let’s go through this in detail.

The top row of Figure 2 shows the input array of elements and three indexes: l, m, and r. The left segment, which is between indexes l and m, inclusive, is pre-sorted. The right segment, which is between indexes m+1 and r, inclusive, is also pre-sorted. Merge algorithm uses these two segments to produce a single sorted result. In-place merge places the result back in the input array between indexes l and r (the bottom row of Figure 1), while using a small constant amount of extra memory. Note that the two input segments touch, whereas the not-in-place merge algorithm had array elements in between the two input segments.

The first step of this divide-and-conquer algorithm is to take the larger of the two input segments, divide it in half to determine the index q1 and its value X. Then a binary search is used within the other segment to determine the index q2, at which all elements to the left are smaller than X, and all elements to the right are larger or equal to X. Thus, each of the two segments have been split into two halves, one less than X and the other greater than or equal to X. These two steps are the same as in not-in-place merge algorithm. The algorithm preserves stability because only the elements that are strictly less than X, but were to the right of X are moved to the left of X.

The next step is to in-place swap the segment q1 to m, inclusive, and the segment m+1 to q2 inclusive, as shown by the diagonal arrows in Figure 2. This swap would be simple if the segments were of equal size, but this occurs rarely. In-Place Block Swap comes to the rescue, because it’s capable of swapping two segments of unequal size, which are touching, in-place. Three algorithms are described in In-Place Block Swap, and the one with the highest performance along with the best parallel scaling needs to be chosen.

The algorithm proceeds recursively by processing (l, q1-1, q3-1) segment and (q3+1, q2-1, r) segments of the bottom row of Figure 1. Note that the array element X at q3 is in its final location and needs no further processing.

Sequential

Listing One shows a sequential divide-and-conquer In-Place Merge algorithm implementation. Lengths of the left and the right input array segments are computed first, determining which segment is larger. When the left (length1) segment is larger, the index of the mid-element is computed (q1). Binary search is used to split the smaller segment (m+1 to r, with length2) using the value of q1, into elements that are strictly smaller than the element at q1 (X in Figure 1). Being strictly less than X at q1, preserves stability. This binary search produces q2, which is the index of the split of the smaller segment (left segment, length2).

template< class _Type >

inline int my_binary_search( _Type value, const _Type* a, int left, int right )

{

long low = left;

long high = __max( left, right + 1 );

while( low < high )

{

long mid = ( low + high ) / 2;

if ( value <= a[ mid ] ) high = mid;

else low = mid + 1; // because we compared to a[mid] and the value was larger than a[mid].

// Thus, the next array element to the right from mid is the next possible

// candidate for low, and a[mid] can not possibly be that candidate.

}

return high;

}

// Swaps two sequential sub-arrays ranges a[ l .. m ] and a[ m + 1 .. r ]

template< class _Type >

inline void block_exchange_mirror( _Type* a, int l, int m, int r )

{

mirror_ptr( a, l, m );

mirror_ptr( a, m + 1, r );

mirror_ptr( a, l, r );

}

// Merge two ranges of source array T[ l .. m, m+1 .. r ] in-place.

// Based on not-in-place algorithm in 3rd ed. of "Introduction to Algorithms" p. 798-802

template< class _Type >

inline void merge_in_place_L1( _Type* t, int l, int m, int r )

{

int length1 = m - l + 1;

int length2 = r - m;

if ( length1 >= length2 )

{

if ( length2 <= 0 ) return; // if the smaller segment has zero elements, then nothing to merge.

int q1 = ( l + m ) / 2; // q1 is mid-point of the larger segment. length1 >= length2 > 0

int q2 = my_binary_search( t[ q1 ], t, m + 1, r ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q1 + ( q2 - m - 1 );

block_exchange_mirror( t, q1, m, q2 - 1 );

merge_in_place_L1( t, l, q1 - 1, q3 - 1 ); // note that q3 is now in its final place and no longer participates in further processing

merge_in_place_L1( t, q3 + 1, q2 - 1, r );

}

else { // length1 < length2

if ( length1 <= 0 ) return; // if the smaller segment has zero elements, then nothing to merge

int q1 = ( m + 1 + r ) / 2; // q1 is mid-point of the larger segment. length2 > length1 > 0

int q2 = my_binary_search( t[ q1 ], t, l, m ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q2 + ( q1 - m - 1 );

block_exchange_mirror( t, q2, m, q1 );

merge_in_place_L1( t, l, q2 - 1, q3 - 1 ); // note that q3 is now in its final place and no longer participates in further processing

merge_in_place_L1( t, q3 + 1, q1, r );

}

}Now, that the split has been found, block swap can be performed, in-place. This moves all elements less than X to the left of X, and all element greater than or equal to X to the right of X. However, elements left of X are in two segments that now need to be merged. Also the two segments of elements right of X also need to be merged. The two recursive calls take care of these two merge operations. The else portion takes care of the opposite case—when the left segment is larger than the right segment.

Note that the in-place merge algorithm used a non-optimized non-hybrid block swap algorithm, for simplicity. An optimized hybrid algorithm is definitely a performance boosting opportunity.

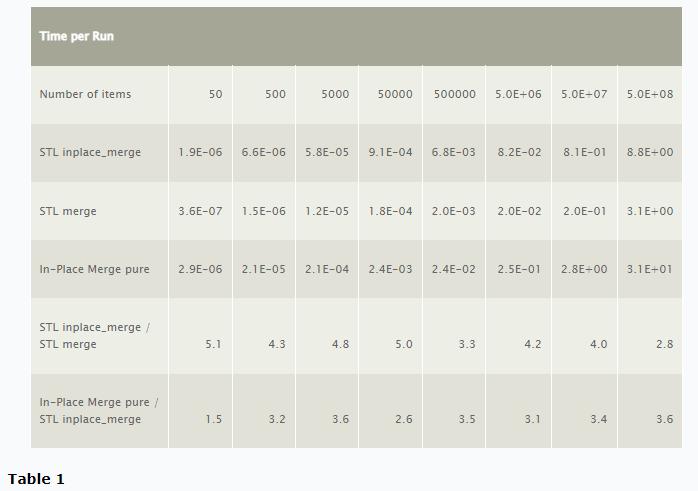

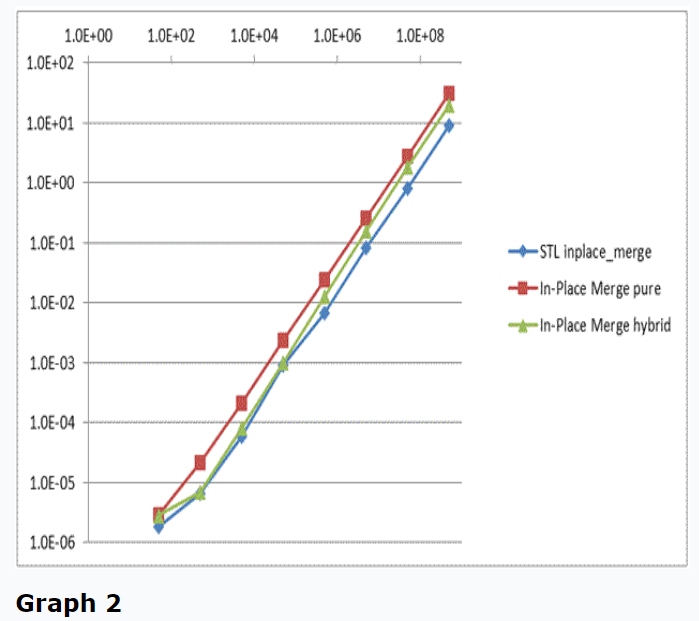

Performance of several in-place and not-in-place merge algorithms is shown in Table 1 and in Graph 1.

STL merge is more than 2.8x faster than STL inplace_merge for all array sizes. The pure divide-and-conquer merge is more than 3X slower than STL inplace_merge for large arrays. This performance disparity may be attributed to STL inplace_merge being an adaptive algorithm, switching to STL merge when additional memory is available to allocate a temporary intermediate buffer and copying the result back to the input array. Also, nearly every algorithm that has been explored so far has performed poorly in its pure form. So, let’s develop a hybrid in-place merge.

Listing Two shows such a hybrid in-place merge algorithm. STL inplace_merge is used as the recursion termination case—processing small sub-arrays.

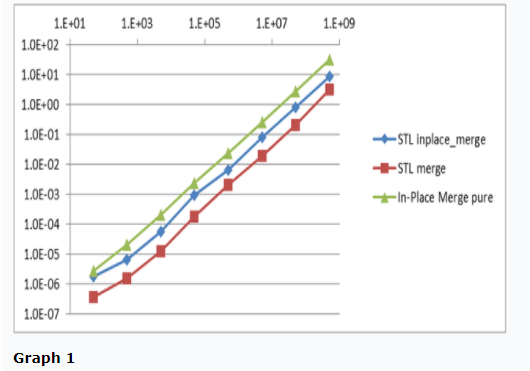

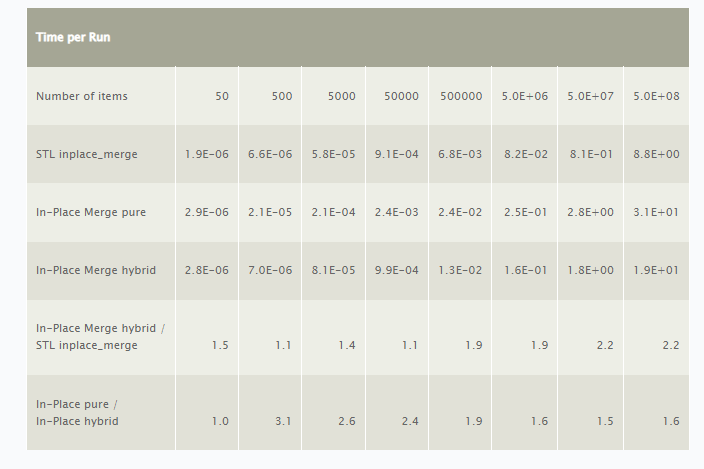

Performance of the hybrid algorithm is shown in Table 2.

template< class _Type >

inline void merge_in_place_1( _Type* t, int l, int m, int r )

{

int length1 = m - l + 1;

int length2 = r - m;

if ( length1 >= length2 )

{

if ( length2 <= 0 ) return;

if (( length1 + length2 ) <= 8192 ) { std::inplace_merge( t + l, t + m + 1, t + r + 1 ); return; }

int q1 = ( l + m ) / 2; // q1 is mid-point of the larger segment

int q2 = my_binary_search( t[ q1 ], t, m + 1, r ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q1 + ( q2 - m - 1 );

block_exchange_mirror( t, q1, m, q2 - 1 ); // 2X speedup

merge_in_place_1( t, l, q1 - 1, q3 - 1 ); // note that q3 is now in its final place and no longer participates in further processing

merge_in_place_1( t, q3 + 1, q2 - 1, r );

}

else {

if ( length1 <= 0 ) return;

if (( length1 + length2 ) <= 8192 ) { std::inplace_merge( t + l, t + m + 1, t + r + 1 ); return; }

int q1 = ( m + 1 + r ) / 2; // q1 is mid-point of the larger segment

int q2 = my_binary_search( t[ q1 ], t, l, m ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q2 + ( q1 - m - 1 );

block_exchange_mirror( t, q2, m, q1 ); // 2X speedup

merge_in_place_1( t, l, q2 - 1, q3 - 1 ); // note that q3 is now in its final place and no longer participates in further processing

merge_in_place_1( t, q3 + 1, q1, r );

}

}

In-place hybrid merge implementation is over 1.5x faster than the pure merge, for large arrays. Interestingly, the hybrid approach for in-place merge yields less gain in performance than it did for not-in-place algorithm, where over 8.5x gain was obtained for large arrays, bringing the performance of the divide-and-conquer algorithm to nearly that of the “simple merge” (see Parallel Merge page 2, table 3). One possible reason is that in-place merge algorithm performs more memory accesses, in the block swap, becoming memory bandwidth bound instead of computationally bound. This effect is visible in Graph 2, where the performance on the hybrid algorithm starts out equal to STL inplace_merge and drifts toward in-place merge pure as the array size increases.

The order of the sequential divide-and-conquer in-place merge algorithm is O(nlgn), where the divide-and-conquer portion produces a tree with O(lgn) levels, with each level processing O(n) elements. The order of not-in-place merge is O(n).

Parallel

A simple, but only partially parallel algorithm falls out naturally from the implementation in Listing Two. This implementation is shown in Listing Three, where the two recursive calls have been parallelized using the parallel_invoke() of Intel TBB or Microsoft PPL. However, block swap has not yet been parallelized.

template< class _Type >

inline void p_merge_in_place_2( _Type* t, int l, int m, int r )

{

int length1 = m - l + 1;

int length2 = r - m;

if ( length1 >= length2 )

{

if ( length2 <= 0 ) return;

if (( length1 + length2 ) <= 1024 ) { std::inplace_merge( t + l, t + m + 1, t + r + 1 ); return; }

int q1 = ( l + m ) / 2; // q1 is mid-point of the larger segment

int q2 = my_binary_search( t[ q1 ], t, m + 1, r ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q1 + ( q2 - m - 1 );

block_exchange_mirror( t, q1, m, q2 - 1 );

//tbb::parallel_invoke(

Concurrency::parallel_invoke(

[&] { p_merge_in_place_2( t, l, q1 - 1, q3 - 1 ); }, // note that q3 is now in its final place and no longer participates in further processing

[&] { p_merge_in_place_2( t, q3 + 1, q2 - 1, r ); }

);

}

else {

if ( length1 <= 0 ) return;

if (( length1 + length2 ) <= 1024 ) { std::inplace_merge( t + l, t + m + 1, t + r + 1 ); return; }

int q1 = ( m + 1 + r ) / 2; // q1 is mid-point of the larger segment

int q2 = my_binary_search( t[ q1 ], t, l, m ); // q2 is q1 partitioning element within the smaller sub-array (and q2 itself is part of the sub-array that does not move)

int q3 = q2 + ( q1 - m - 1 );

block_exchange_mirror( t, q2, m, q1 );

//tbb::parallel_invoke(

Concurrency::parallel_invoke(

[&] { p_merge_in_place_2( t, l, q2 - 1, q3 - 1 ); }, // note that q3 is now in its final place and no longer participates in further processing

[&] { p_merge_in_place_2( t, q3 + 1, q1, r ); }

);

}

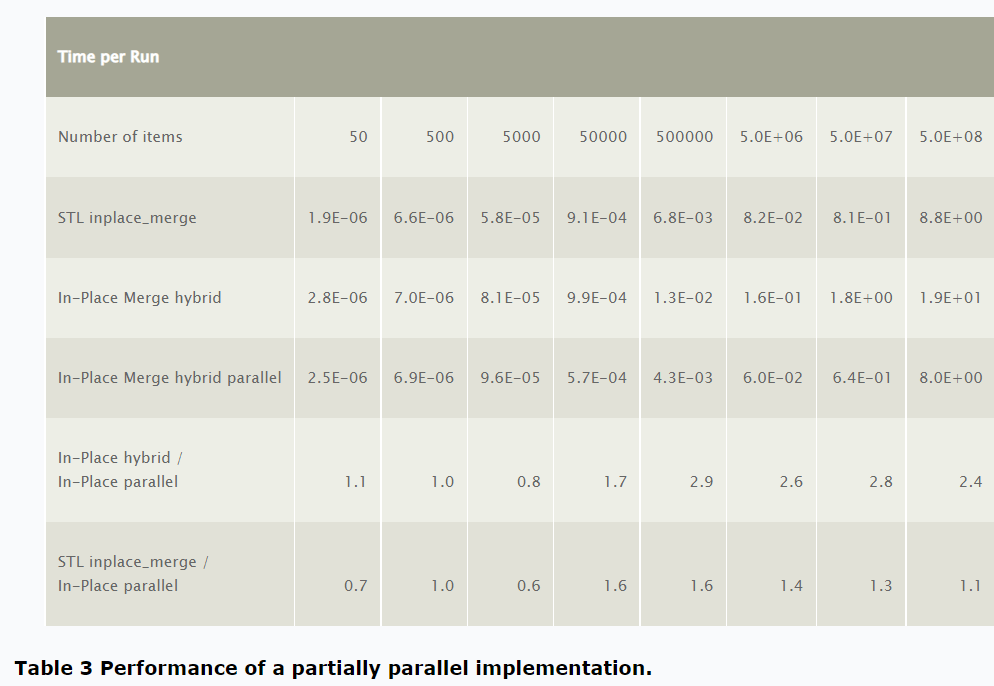

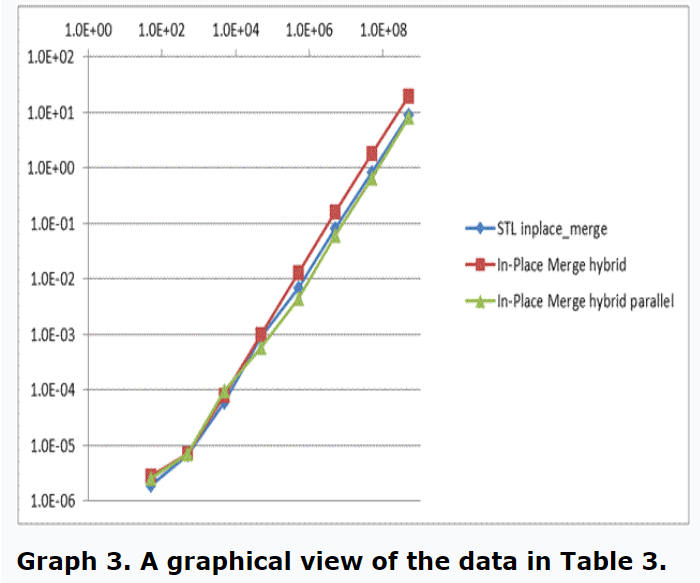

}Table 3 and Graph 3 summarize performance of this partially parallel implementation.

The parallel implementation is the fastest for large arrays, running on a hyper-threaded, quad-core Intel i7-860 processor. It scaled to more than 2.4x for large array sizes over the sequential divide-and-conquer implementation, but only 10% faster than STL inplace_merge() (possibly due to the dynamic nature of STL implementation). So far, in-place merge has not scaled as well as not-in-place merge, most likely due to much higher memory bandwidth usage (in block swap, which has not been parallelized yet).

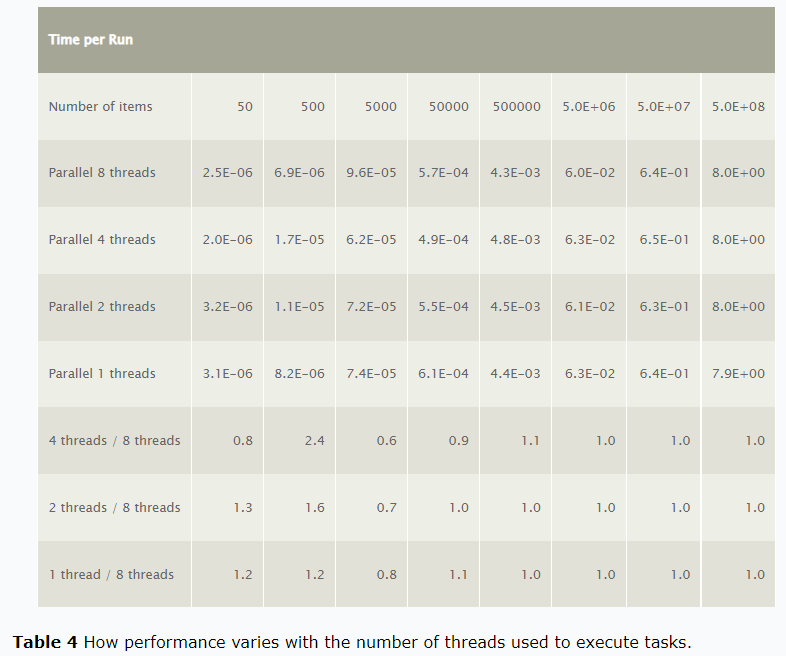

Table 4 shows how performance varies with the number of threads used to execute tasks.

Performance is nearly constant with the number of threads, which is most likely due to memory bandwidth bottleneck limiting parallel performance gain. This needs further investigation, especially because the single thread divide-and-conquer outperforms the sequential divide-and-conquer (although not by a significant amount).

Conclusion

Parallel in-place stable merge algorithm has been developed. The sequential algorithm is not new and goes back to [1], with a similar implementation in STL as inplace_merge(). The parallel implementation is an extension of Parallel Merge algorithm, using block swap/exchage algorithm to swap two inner sub-arrays of unequal size in-place. This in-place algorithm does not scale as well with the number of cores, most likely due to becoming memory bandwidth limited, yet outperforms the sequential algorithm slightly. The order of the algorithm is O(nlgn), and should extend easily into in-place stable parallel merge sort. Further performance gains could come from parallelization of block swap/exchange, along with possible elimination of redundant rotations.

References

[1] S. Dvorak, and B. Durian, “Merging by Decomposition Revisited”, The Computer Journal, Vol. 31, No. 6, 1988.

Victor Duvanenko is a frequent contributor to Dr. Dobb’s on algorithms for parallel programming.